Evals for Everyone: A Deep Dive

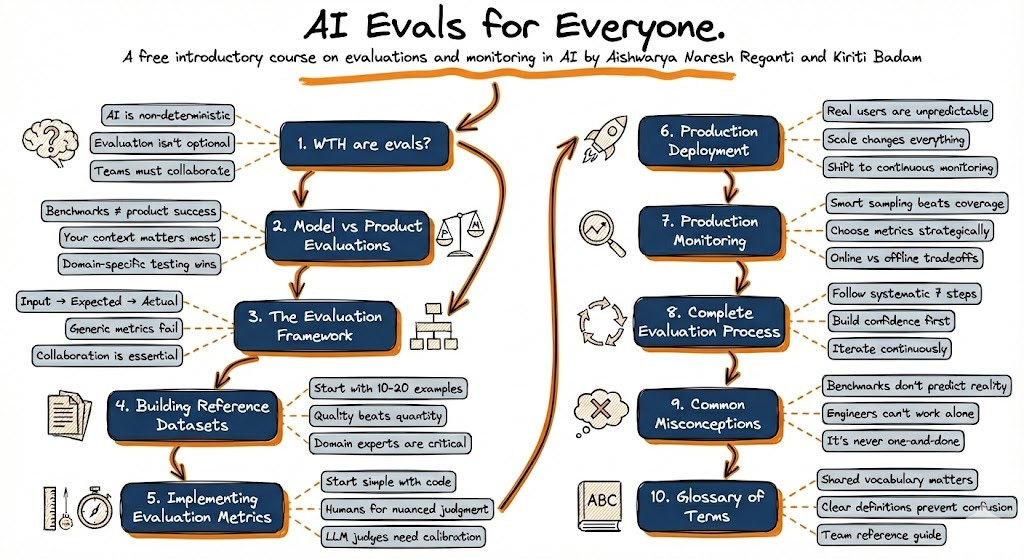

We ran a 3-part series called "Evals for Everyone." Here's what we covered, condensed into one post.

We just wrapped our “Evals for Everyone” Lightning Lesson series on Maven, with over 2,000 signups from practitioners at Google, Meta, Amazon, Anthropic, and about 90 other companies. I’ve been thinking about evals for a long time (Kiriti and I literally wrote an O’Reilly Radar piece arguing the word itself does more harm than good), and this series was our attempt to lay out the full picture in three focused sessions.

What follows is a recap of each session, the frameworks we shared, and the parts that generated the most interesting Q&A from the audience.

Why evals confuse everyone

Before we get into the sessions: a quick note on why we built this series.

Product managers hear “evals” and think behavior definitions. Model vendors hear it and think benchmark scores. Data teams hear it and think annotation guidelines. Engineers hear it and think automated tests. Everyone’s using the same word to describe different parts of the same process, and nobody’s talking about the full picture.

That’s the gap we wanted to close.

Design Evals Users Will Trust

The first session was about pre-deployment evals, and we started with the thing that trips up the most teams: confusing model evaluation with product evaluation.

Benchmarks tell you what a model can do. Product evaluation tells you if it should be used.

Consider this scenario. Model A scores 92% on MMLU. Model B scores 87%. Model A looks like the obvious pick. But if you’re building an insurance claims processor, that 5% gap on a general knowledge benchmark tells you exactly nothing about which model will handle your specific edge cases better. Benchmarks don’t know your domain, they don’t know your requirements, and they don’t know your users.

The framework we introduced is simple. For any AI system, there are three things:

Input (not just the user query, but conversation history, retrieved documents, system prompts, business rules, API results)

Expected output (defined collaboratively by subject matter experts, product, AND engineering, not just one group)

Actual output (what your system actually produces)

Your job is to close the gap between expected and actual. The entire evals process is built around that.

The part that surprised the most people: you should start from observed failures, not from a predetermined checklist of metrics. Most teams pick metrics first (hallucination rate, helpfulness, coherence) and then evaluate against them. We argue the opposite. Run your system on 50 real examples first. Watch where it breaks. Then build metrics around those failure patterns.

50 examples is enough to start. Even 10 to 20 is fine. So many teams today work with zero curated examples. Having any reference data at all puts you ahead of most.

We also spent time on why generic metric names are misleading. “Helpfulness” in customer service means solving problems quickly without over-explaining. In education, it means being more thorough and patient. In medical information, it means accuracy above all else, where hallucination becomes the metric that actually matters. The name of the metric doesn’t tell you much. The rubric behind it is everything.

Scale Evals Without the Chaos

If Session 1 asked “does my system work as intended?”, Session 2 flipped the question to “how are we doing overall, and where should we focus?”

Because real users surprise you every day. They come up with use cases you never anticipated. And even a 95% success rate (genuinely good for a production AI application) still means 500 problematic conversations daily at 10,000 interactions. You don’t know the stakes of each one.

We introduced an Impact-Reliability-Cost framework for choosing which metrics to actually run at scale. Unlike traditional unit tests, AI evals cost real money. Every LLM-judge call is an API call. You have to be strategic:

Impact: Does this metric reveal actionable problems?

Reliability: LLM judges are typically 70-90% calibrated with humans. Factor that error in, don’t pretend it doesn’t exist.

Cost: Code-based metrics cost essentially nothing. LLM-judge metrics require you to estimate token costs at your volume.

Plot your metrics on an impact vs. cost graph. Some are must-haves (high impact, low cost). Some are strategic investments. And some you’re better off dropping entirely. 3 to 5 actionable metrics can beat 20 ignored ones.

The other big idea from this session was the Discovery Loop. Your pre-deployment evals will catch the problems you anticipated. Production surfaces problems you couldn’t predict. User signals (editing AI outputs, abandoning conversations, contacting support) are your early warning system. When those signals go red but your eval scores look green, your evaluation framework has blind spots. That’s not a system problem. That’s a measurement problem.

One more thing we covered that tends to surprise people: you should retire evals. An eval that was useful for GitHub Copilot a year ago might be completely irrelevant today because the models improved. Evals can become stale. Don’t just keep adding measurement. Prune what’s no longer giving you signal.

Evals in Action with Arize

For the final session, we brought in Laurie Voss (Head of DevRel at Arize, co-founder of npm) to go fully hands-on. The entire session was live code and working demos using Arize Phoenix.

Laurie’s framing for the session: “Traces tell you what happened, evals tell you whether it was any good.” Just as logs record what your server did, traces record what your AI did. Every agent call, tool call, and LLM invocation with inputs and outputs at each step.

The session covered two complementary approaches:

Code evals are deterministic Python functions. They run in milliseconds, cost nothing, and give unambiguous answers. Checking if output is valid JSON? Write a code eval. Checking for forbidden phrases like “as an AI language model”? Code eval. People reach for LLM judges too quickly. Just because output varies doesn’t always mean you need an AI judge to evaluate it.

LLM-as-a-judge evals handle the questions code can’t answer: Is this factually accurate? Did it stay faithful to the source? Is the tone appropriate? Most real systems use both approaches together.

One of the most useful ideas from Laurie’s session was about what makes LLM judges actually useful as a debugging tool:

“The explanation is what makes evals into a debugging tool and not just a scoreboard.”

An LLM judge that says “incorrect” without explaining why doesn’t give you much to work with. The explanation tells you what was wrong, what was missing, what the agent should have done differently. When you see the same explanation across 50 different traces, you know you have a systematic problem, not an edge case. And when the judge itself is wrong, the explanation tells you which criteria it misapplied, so you can improve the judge. It works in both directions.

Writing good eval rubrics

We spent a good chunk of time on the anatomy of a good custom eval prompt. Five components:

Role definition: Give the judge domain context about what agent it’s evaluating

Explicit criteria: List exactly what makes a response correct and what makes it incorrect (don’t just say “a good response”)

Data presentation: Use clear delimiters, label each piece clearly

Labeled examples: One correct and one incorrect response dramatically improves consistency

Constrained output: Binary (correct/incorrect), not a 10-point scale

That last one generated a lot of discussion. A 10-point scale sounds more precise, but it’s actually noisier. Even as a human, the difference between a 6 and a 7 is hard to pin down. Binary or at most three categories (correct/partial/incorrect) gives you cleaner signal.

If you’re starting from zero

If you sat through all three sessions and had to distill it into a starting point:

Start with 50 examples. Real inputs your system will see, paired with what good output looks like. Get your subject matter experts, product people, and engineers in the same room to define “good.” That collaborative definition is half the work.

Run your system on those examples and watch it fail. Don’t pick metrics from a menu. Let the failures tell you what to measure.

Build human baselines before LLM judges. An LLM judge without a human baseline is just “a fancy way of being wrong at scale.” Start with humans, calibrate your judges against those humans, then scale.

Use code evals for everything you can. Save LLM judges for the genuinely subjective questions.

Then iterate. Run evals, read the explanations, fix the pattern, run again. That cycle is how you move from “I think it’s working” to “I can prove it’s working.”

Going deeper

This series was a condensed version of our free AI Evals for Everyone course on GitHub, which comes with video lectures and a certificate.

If you want to go further and build evaluation systems with your own product data, our Problem-First AI course on Maven covers this end-to-end. Over 2,000 engineers and PMs from companies like Meta, Google, and Anthropic have taken it, and evals is one of the modules people keep telling us changed how they ship AI products. Our next cohort starts April 25.

Recordings from the series:

See you at the next one :)

Aish